AI security tools can be trusted, but not blindly.

For a UK small or medium-sized business, AI cyber security tools are useful for spotting suspicious behaviour, summarising alerts, detecting phishing, prioritising vulnerabilities and helping small teams respond faster. But they are not magic shields. They still make mistakes, miss attacks, create false alarms, depend on good configuration and can introduce privacy, legal and operational risks.

The safest answer is this:

Trust AI security tools as assistants, not as replacements for proper cyber security, human judgement, backups, access control, staff training and incident planning.

The UK National Cyber Security Centre says AI is already changing both cyber defence and cyber attacks, and Microsoft’s 2025 Digital Defense Report describes AI as a tool, threat and vulnerability at the same time. Humanity really did look at computers and decide, “What if the burglar also had automation?”

What Do AI Security Tools Actually Do?

They Look For Patterns Humans Miss

AI security tools are usually used inside products such as:

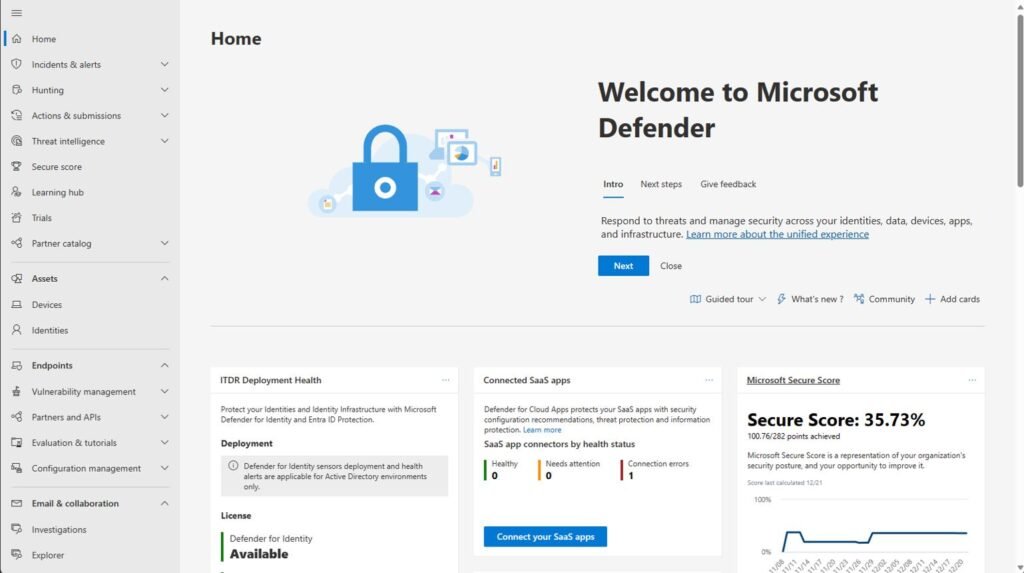

Microsoft Defender

CrowdStrike

SentinelOne

Darktrace

Sophos

Palo Alto Cortex

Cisco security tools

Google/Mandiant tools

Email security platforms

Managed Detection and Response services

They analyse things like:

Login behaviour

Email patterns

Device activity

Network traffic

File changes

Cloud activity

Malware behaviour

Unusual access attempts

For example, if a staff member normally logs in from Birmingham during office hours, then suddenly logs in from another country at 2:13am and downloads company files, an AI-assisted security tool may flag that as suspicious.

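That is useful. A person reviewing logs by hand could easily miss it, and a traditional signature-based antivirus tool may not react at all, because no malicious file has been run. AI-assisted tools are better at spotting unusual behaviour across large volumes of activity data.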
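Here is a deliberately simple Python sketch of the idea. Real products use statistical and machine learning models trained on far more signals. This version swaps in three hand-written rules instead: an unfamiliar country, a sign-in outside usual hours, and an unusually large download. It adds one point for each. The `LoginEvent` and `UserBaseline` structures, the country codes and the 10x download threshold are all illustrative assumptions, not any vendor's design.

```python
from dataclasses import dataclass

@dataclass
class LoginEvent:
    user: str
    country: str          # ISO country code from IP geolocation
    hour: int             # local hour of the sign-in, 0-23
    files_downloaded: int

@dataclass
class UserBaseline:
    usual_countries: set
    usual_hours: range    # e.g. range(7, 19) covers 07:00 to 18:59
    typical_downloads: int

def risk_score(event, baseline):
    """Add one point for each way this sign-in departs from the user's normal pattern."""
    score = 0
    if event.country not in baseline.usual_countries:
        score += 1
    if event.hour not in baseline.usual_hours:
        score += 1
    # Hypothetical threshold: more than 10x the usual download volume.
    if event.files_downloaded > 10 * baseline.typical_downloads:
        score += 1
    return score

baseline = UserBaseline(usual_countries={"GB"}, usual_hours=range(7, 19), typical_downloads=20)
normal = LoginEvent("j.smith", "GB", hour=9, files_downloaded=15)
odd = LoginEvent("j.smith", "RO", hour=2, files_downloaded=900)
print(risk_score(normal, baseline))  # 0
print(risk_score(odd, baseline))     # 3
```

The routine office-hours sign-in scores 0. The 2am sign-in from abroad with a large download scores 3. A real tool would learn each user's baseline automatically rather than relying on values typed in by hand.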

They Help With Alert Overload

Small UK businesses do not usually have a full security team. Most have “the person who once restarted the router successfully”, which somehow becomes permanent IT leadership.

AI can help by grouping alerts, explaining risk levels and suggesting what to investigate first. This matters because cyber security tools often produce too many warnings. If everything is marked urgent, nothing is urgent.
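Here is a minimal Python sketch of the grouping idea. Related alerts for the same user are collapsed into one item, and items are ordered by their most severe alert. The alert format and severity labels are hypothetical. Real tools group on many more attributes, such as device, time window and attack technique, and may use machine learning to do it.

```python
from collections import defaultdict

# Hypothetical severity scale; real tools use their own labels.
SEVERITY = {"low": 1, "medium": 2, "high": 3, "critical": 4}

def triage(alerts):
    """Group alerts by user, then order the groups so the most severe
    (and, on a tie, the busiest) one is looked at first."""
    groups = defaultdict(list)
    for alert in alerts:
        groups[alert["user"]].append(alert)

    queue = []
    for user, items in groups.items():
        worst = max(items, key=lambda a: SEVERITY[a["severity"]])
        queue.append((user, len(items), worst["severity"]))

    # Sort by worst severity first, then by number of related alerts.
    queue.sort(key=lambda row: (SEVERITY[row[2]], row[1]), reverse=True)
    return queue

alerts = [
    {"user": "reception", "severity": "low", "title": "Spam quarantined"},
    {"user": "accounts", "severity": "high", "title": "Sign-in from new country"},
    {"user": "accounts", "severity": "medium", "title": "New inbox rule created"},
    {"user": "reception", "severity": "low", "title": "Spam quarantined"},
    {"user": "director", "severity": "medium", "title": "MFA prompt denied"},
]
for row in triage(alerts):
    print(row)
# ('accounts', 2, 'high')
# ('director', 1, 'medium')
# ('reception', 2, 'low')
```

Five raw alerts become three items. The two related alerts for the accounts user sit at the top of the queue, and the quarantined spam drops to the bottom.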

Where AI Security Tools Are Very Useful

Phishing Detection

AI is good at spotting suspicious emails, fake login pages and unusual sender behaviour. That matters because phishing remains one of the most common ways attackers get into organisations.

AI can detect:

Lookalike domains

Suspicious attachments

Unusual writing patterns

Fake invoice emails

Credential-harvesting links

Business email compromise attempts

The NCSC has warned that AI also helps criminals create more convincing phishing emails, so defensive AI is increasingly useful, but it is not enough on its own.
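Lookalike-domain detection is one of the easier items on that list to illustrate. The sketch below compares a sender's domain against a list of domains the business trusts, using Levenshtein edit distance, and flags near misses. It is a simplification. Commercial filters also look at homoglyphs (such as Cyrillic letters), newly registered domains and sender reputation. The trusted domains here are hypothetical.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: the minimum number of single-character
    insertions, deletions or substitutions needed to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if equal)
            ))
        prev = curr
    return prev[-1]

def lookalike_of(sender_domain, trusted_domains, max_distance=2):
    """Return the trusted domain the sender appears to imitate, or None."""
    sender_domain = sender_domain.lower()
    for trusted in trusted_domains:
        distance = edit_distance(sender_domain, trusted)
        # Distance 0 is the genuine domain; a small non-zero distance is suspicious.
        if 0 < distance <= max_distance:
            return trusted
    return None

# Hypothetical domains the business deals with.
TRUSTED = ["microsoft.com", "examplesolicitors.co.uk"]

print(lookalike_of("rnicrosoft.com", TRUSTED))           # microsoft.com
print(lookalike_of("examp1esolicitors.co.uk", TRUSTED))  # examplesolicitors.co.uk
print(lookalike_of("microsoft.com", TRUSTED))            # None
```

Edit distance on its own misfires on short domains, because many unrelated short names are only one or two edits apart. That is one reason real filters combine several signals.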

Endpoint Protection

AI-powered endpoint security can detect suspicious behaviour on laptops and desktops, such as:

A file trying to encrypt lots of documents

A script running from a strange folder

A malicious attachment launching PowerShell

A user account suddenly accessing sensitive data

This is particularly useful against ransomware, where speed matters. If a tool can isolate an infected laptop quickly, it may stop one compromised machine becoming a company-wide disaster.
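Mass encryption is often spotted with a heuristic along these lines. Encrypted data looks random, so a process that writes many high-entropy files in a short time is suspicious. This is a simplified sketch, not how any particular product works. The 7.5 bits-per-byte threshold, the 60-second window and the 50-file limit are hypothetical values.

```python
import math
import os
from collections import Counter

def shannon_entropy(data: bytes) -> float:
    """Bits per byte: close to 8.0 for encrypted or random data, much lower for text."""
    if not data:
        return 0.0
    total = len(data)
    return -sum((c / total) * math.log2(c / total) for c in Counter(data).values())

def looks_like_mass_encryption(writes, window_seconds=60,
                               max_high_entropy_writes=50, entropy_threshold=7.5):
    """writes: list of (timestamp_seconds, file_bytes) for one process.
    Returns True if too many high-entropy files are written inside any window."""
    times = sorted(t for t, data in writes if shannon_entropy(data) >= entropy_threshold)
    start = 0
    for end in range(len(times)):
        # Slide the window start forward until it spans at most window_seconds.
        while times[end] - times[start] > window_seconds:
            start += 1
        if end - start + 1 > max_high_entropy_writes:
            return True
    return False

# 100 random-looking files written in 50 seconds, versus 100 ordinary text edits.
encrypted_burst = [(i * 0.5, os.urandom(4096)) for i in range(100)]
normal_edits = [(i * 0.5, b"Viewing booked for Tuesday at 10am.\n" * 50) for i in range(100)]
print(looks_like_mass_encryption(encrypted_burst))  # True
print(looks_like_mass_encryption(normal_edits))     # False
```

Compressed formats such as ZIP archives and JPEG images also have high entropy. In practice, a rule like this would need exclusions and extra signals to avoid false alarms.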

Cloud And Microsoft 365 Monitoring

Many UK SMEs now run on Microsoft 365, Google Workspace, Dropbox, Xero, QuickBooks, Shopify or similar cloud services.

AI tools can help detect:

Impossible travel logins

Mass file downloads

New forwarding rules in email accounts

Repeated failed login attempts

Admin privilege abuse

Suspicious OAuth app permissions

This is important because many modern attacks are not “viruses” in the old sense. They are account takeovers. The attacker logs in using stolen credentials and looks like a normal user until damage is done.
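Impossible-travel detection, the first item in that list, comes down to simple arithmetic. If two sign-ins are further apart than anyone could travel in the time between them, one of them is probably not the real user. The sketch assumes each sign-in has already been mapped to approximate coordinates, usually from IP geolocation, which is imprecise. It uses a 900 km/h ceiling (roughly airliner cruising speed) as a hypothetical threshold.

```python
import math
from datetime import datetime

EARTH_RADIUS_KM = 6371.0

def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def impossible_travel(login_a, login_b, max_speed_kmh=900):
    """Each login is (datetime, latitude, longitude). Flags the pair if the
    implied travel speed between them exceeds max_speed_kmh."""
    (t1, lat1, lon1), (t2, lat2, lon2) = sorted([login_a, login_b])
    hours = (t2 - t1).total_seconds() / 3600
    distance = haversine_km(lat1, lon1, lat2, lon2)
    if hours == 0:
        return distance > 0
    return distance / hours > max_speed_kmh

birmingham = (datetime(2025, 3, 3, 1, 13), 52.48, -1.89)
lagos = (datetime(2025, 3, 3, 2, 13), 6.52, 3.38)        # one hour later
manchester = (datetime(2025, 3, 3, 4, 13), 53.48, -2.24)  # three hours later
print(impossible_travel(birmingham, lagos))       # True
print(impossible_travel(birmingham, manchester))  # False
```

VPNs, mobile networks and cloud proxies can make a genuine user appear to jump between countries. That is a common source of false positives for this kind of rule.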

Vulnerability Management

AI can help prioritise software weaknesses. Instead of giving you a giant list of 3,000 issues, a good tool may tell you:

Which vulnerability is being actively exploited

Which system is internet-facing

Which issue affects business-critical data

Which patch should be done first

That is genuinely useful, because small businesses rarely have time to patch everything instantly.
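A rough version of that prioritisation logic is sketched below. The weights are hypothetical. They were chosen only to show the principle: a flaw that is actively exploited on an internet-facing system usually matters more than a higher CVSS base score on an isolated machine. The vulnerability IDs are placeholders, not real CVEs.

```python
from dataclasses import dataclass

@dataclass
class Finding:
    vuln_id: str
    cvss: float               # base severity score, 0.0-10.0
    actively_exploited: bool  # known to be used in real attacks
    internet_facing: bool
    business_critical: bool

def priority(f: Finding) -> float:
    # Hypothetical weights: exploitation and exposure outrank raw severity.
    score = f.cvss
    if f.actively_exploited:
        score += 10
    if f.internet_facing:
        score += 5
    if f.business_critical:
        score += 3
    return score

def patch_order(findings):
    """Return vulnerability IDs, highest priority first."""
    return [f.vuln_id for f in sorted(findings, key=priority, reverse=True)]

findings = [
    Finding("VULN-A", 9.8, actively_exploited=False, internet_facing=False, business_critical=False),
    Finding("VULN-B", 7.5, actively_exploited=True, internet_facing=True, business_critical=False),
    Finding("VULN-C", 5.3, actively_exploited=False, internet_facing=True, business_critical=True),
]
print(patch_order(findings))  # ['VULN-B', 'VULN-C', 'VULN-A']
```

The finding with the highest CVSS score ends up last. It is neither exploited nor exposed, which is the kind of reordering a good tool should explain rather than just assert.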

Where AI Security Tools Cannot Be Fully Trusted

They Can Be Wrong

AI tools can produce false positives and false negatives.

A false positive is when the tool says something is dangerous but it is harmless.

A false negative is when the tool misses something dangerous.

Both are a problem.

Too many false positives cause staff to ignore alerts. False negatives give a false sense of safety. This is why AI security tools should be monitored, tuned and reviewed rather than simply installed and worshipped like some glowing digital oracle.
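The trade-off between the two kinds of error is easiest to see with some arithmetic. The figures below are invented for illustration, not measurements of any product.

```python
def alert_quality(tp: int, fp: int, fn: int, tn: int):
    """Standard confusion-matrix ratios for a detection tool.

    tp: real attacks that raised an alert      fn: real attacks missed (false negatives)
    fp: harmless events that raised an alert   tn: harmless events correctly ignored
    """
    precision = tp / (tp + fp)            # share of alerts that were genuine
    recall = tp / (tp + fn)               # share of genuine attacks that were caught
    false_positive_rate = fp / (fp + tn)  # share of harmless events wrongly flagged
    return precision, recall, false_positive_rate

# Hypothetical month: 10 real malicious events among 10,000 in total.
precision, recall, fpr = alert_quality(tp=8, fp=192, fn=2, tn=9_798)
print(f"precision {precision:.0%}, recall {recall:.0%}, false positive rate {fpr:.1%}")
# precision 4%, recall 80%, false positive rate 1.9%
```

This tool wrongly flags fewer than 2% of harmless events, which sounds accurate. Yet in this example 96% of its alerts are false alarms, and it still misses 2 of the 10 real incidents. That is how alert fatigue and false confidence can exist at the same time.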

They Depend On Good Data

AI tools are only as good as the data they can see.

If your business has:

Poor logging

Unmanaged devices

Shared passwords

No MFA

Old software

No asset list

Personal laptops mixed with business laptops

No central email security

No backup monitoring

Then even a clever AI tool will struggle. It cannot protect what it cannot see.
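A quick way to find out what a tool cannot see is to compare the business's asset list with the devices the security tool actually reports on. The sketch assumes both can be exported as lists of device names. It also assumes the tool reports how many days ago each device last checked in. The device names and the 7-day staleness threshold are hypothetical.

```python
def coverage_gaps(asset_register, reporting, last_seen_days, stale_after_days=7):
    """Compare the asset list with what the security tool can see.

    asset_register: set of device names the business owns.
    reporting: set of device names the security tool knows about.
    last_seen_days: device name -> days since its last check-in.
    """
    missing = asset_register - reporting   # owned, but the tool never sees them
    stale = {d for d in asset_register & reporting
             if last_seen_days.get(d, float("inf")) > stale_after_days}
    unknown = reporting - asset_register   # seen by the tool, but not on the list
    return missing, stale, unknown

assets = {"LAPTOP-01", "LAPTOP-02", "LAPTOP-03", "RECEPTION-PC"}
reporting = {"LAPTOP-01", "LAPTOP-02", "RECEPTION-PC", "PERSONAL-MACBOOK"}
last_seen = {"LAPTOP-01": 0, "LAPTOP-02": 30, "RECEPTION-PC": 1, "PERSONAL-MACBOOK": 2}

missing, stale, unknown = coverage_gaps(assets, reporting, last_seen)
print(missing)  # {'LAPTOP-03'}
print(stale)    # {'LAPTOP-02'}
print(unknown)  # {'PERSONAL-MACBOOK'}
```

Each result points back to a problem in the list above. There is an unprotected laptop, a device that has silently stopped reporting, and a personal machine mixed in with business ones.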

They Do Not Fix Bad Security Basics

AI does not remove the need for:

Multi-factor authentication

Strong password policies

Patch management

Backups

Cyber Essentials controls

Staff training

Device encryption

Admin account control

Incident response planning

The NCSC’s advice remains clear that standard cyber security controls are foundational, including when AI or machine learning is involved.

They Can Be Attacked Too

AI systems can be manipulated. Attackers may try to trick models, poison data, hide malicious behaviour or exploit weaknesses in how the tool makes decisions.

The NCSC has published guidance on adversarial attacks against machine learning and AI, warning that AI-specific weaknesses may need mitigations beyond normal cyber controls.

The UK Legal And Compliance Problem

AI Security Tools May Process Personal Data

If an AI security tool monitors emails, login activity, files, staff behaviour or customer data, it may process personal data under UK GDPR.

That means UK businesses need to think about:

What data is collected

Where it is stored

Who can access it

Whether it leaves the UK or EEA

How long logs are retained

Whether staff are informed

Whether the supplier uses the data to train models

Whether automated decisions affect employees

The ICO has guidance on AI and data protection, including fairness, transparency and lawful processing.
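Retention is one of the few items on that list that translates directly into configuration. Here is a minimal sketch, assuming log entries carry a timezone-aware `timestamp` field (a hypothetical structure). A scheduled job could drop anything older than the period the business has decided it can justify. UK GDPR does not set a single fixed retention period for security logs, so the 90 days below is purely an example.

```python
from datetime import datetime, timedelta, timezone

def apply_retention(entries, retention_days, now=None):
    """Return (entries to keep, number purged) for a given retention period."""
    if now is None:
        now = datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    kept = [e for e in entries if e["timestamp"] >= cutoff]
    return kept, len(entries) - len(kept)

now = datetime(2025, 6, 1, tzinfo=timezone.utc)
entries = [
    {"timestamp": now - timedelta(days=10), "event": "sign-in"},
    {"timestamp": now - timedelta(days=200), "event": "file download"},
]
kept, purged = apply_retention(entries, retention_days=90, now=now)
print(len(kept), purged)  # 1 1
```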

Do Not Let AI Make Serious Decisions Alone

A tool that blocks a login is one thing. A tool that automatically disciplines an employee, reports someone as malicious, terminates access without review or flags someone as an insider threat is much more sensitive.

UK data protection law has specific rules for decisions made solely by automated means that have legal or similarly significant effects on people, and those rules can apply here. Human review of serious decisions matters.
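In practice, this often means splitting actions into two groups. Quick, reversible containment steps can be taken by the tool on its own. Decisions with serious consequences for a person wait for human sign-off. The action names below are hypothetical. Where the line sits is a judgement for each business, not a rule taken from the law or the ICO.

```python
# Hypothetical names for quick, reversible containment steps.
AUTOMATIC = {"block_sign_in", "quarantine_email", "isolate_device"}

def handle(action, target, approved_by=None):
    """Run low-impact actions at once; hold everything else for a person."""
    if action in AUTOMATIC:
        return f"done: {action} on {target}"
    # Anything else (disabling accounts, flagging an insider threat,
    # notifying HR, or an action we do not recognise) waits for approval.
    if approved_by is None:
        return f"queued for review: {action} on {target}"
    return f"done: {action} on {target} (approved by {approved_by})"

print(handle("isolate_device", "LAPTOP-07"))
print(handle("disable_account", "j.smith"))
print(handle("disable_account", "j.smith", approved_by="office manager"))
```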

Real World Example: AI Helps, But Humans Still Matter

Scenario: A 12-Person Estate Agency

A UK estate agency uses Microsoft 365, shared calendars, cloud documents and email-heavy communication with buyers, sellers, solicitors and mortgage advisers.

An attacker steals a staff member’s password through a fake Microsoft login page.

An AI security tool might detect:

Login from a strange location

Unusual mailbox access

A new email forwarding rule

Mass access to client files

Suspicious messages sent to clients

That could stop the attack early.

But if the business has no MFA, no admin review, no backup testing and no one checking alerts, the AI tool may shout into the void. The void, predictably, does not answer support tickets.
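One of the detections in this scenario, a new email forwarding rule, is simple enough to sketch. An attacker who has taken over a mailbox can add a rule that quietly copies mail to an outside address. The example assumes inbox rules have already been exported into simple records with a `forward_to` list. That shape is hypothetical and is not the format of the Microsoft 365 APIs. The domains and addresses are invented.

```python
def external_forwarding(rules, internal_domain):
    """Return (mailbox, rule name, destination) for every rule that sends
    mail to an address outside the business's own domain."""
    findings = []
    for rule in rules:
        for address in rule.get("forward_to", []):
            domain = address.rsplit("@", 1)[-1].lower()
            if domain != internal_domain.lower():
                findings.append((rule["mailbox"], rule["name"], address))
    return findings

rules = [
    {"mailbox": "sales@example-agency.co.uk", "name": "Team copy",
     "forward_to": ["lettings@example-agency.co.uk"]},
    {"mailbox": "j.smith@example-agency.co.uk", "name": "Archive",
     "forward_to": ["inbox.copy@example.net"]},
]
print(external_forwarding(rules, "example-agency.co.uk"))
# [('j.smith@example-agency.co.uk', 'Archive', 'inbox.copy@example.net')]
```

A real check would also need an allowlist for partners the business legitimately forwards to, such as an outsourced bookkeeper.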

What Would Actually Protect The Business?

MFA on all accounts

Conditional access policies

Email security filtering

Endpoint protection

Backups tested monthly

Staff phishing training

Admin accounts separated from normal accounts

Cyber Essentials alignment

Incident response plan

Supplier review of the AI tool

AI helps most when these basics are already in place.
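Testing backups means restoring them, then checking that what came back matches the original. Here is a minimal sketch of the checking step, assuming both the live folder and the restored copy are reachable as ordinary directories. It hashes every file with SHA-256 and compares the results. Folder and file names in the example are made up.

```python
import hashlib
import tempfile
from pathlib import Path

def file_hashes(root):
    """Map each file's path, relative to root, to its SHA-256 digest."""
    hashes = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            digest = hashlib.sha256(path.read_bytes()).hexdigest()
            hashes[str(path.relative_to(root))] = digest
    return hashes

def verify_restore(original, restored):
    """Return (files missing from the restore, files whose contents differ)."""
    expected = file_hashes(original)
    actual = file_hashes(restored)
    missing = sorted(set(expected) - set(actual))
    changed = sorted(name for name in expected
                     if name in actual and expected[name] != actual[name])
    return missing, changed

# Demonstration with throwaway folders standing in for live data and a restore.
with tempfile.TemporaryDirectory() as live_dir, tempfile.TemporaryDirectory() as restore_dir:
    live, restore = Path(live_dir), Path(restore_dir)
    (live / "clients.csv").write_text("id,name\n1,Smith\n")
    (live / "contracts.txt").write_text("signed")
    (restore / "clients.csv").write_text("id,name\n")  # truncated copy
    result = verify_restore(live, restore)

print(result)  # (['contracts.txt'], ['clients.csv'])
```

`read_bytes()` loads each file into memory. That is fine for a sketch, but very large files would need chunked reading. Files that change legitimately between the backup and the test will also show up as changed, so compare against a snapshot taken at backup time where possible.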

What UK SMEs Should Ask Before Buying AI Security Tools

Is The Tool Actually AI, Or Just Marketing?

Many products now call everything AI because “advanced filtering software” apparently does not justify a premium invoice anymore.

Ask the supplier:

What does the AI actually do?

Does it detect, block, summarise, prioritise or automate?

Can we override decisions?

How often is the model updated?

What data does it inspect?

Where is the data stored?

Does our data train the supplier’s AI?

Can we export logs?

Does it integrate with Microsoft 365?

Does it support UK GDPR requirements?

Is support included?

Does It Produce Clear Alerts?

A good tool should explain:

What happened

Which user or device was involved

Why it matters

What action was taken

What action you should take next

A bad tool says “anomaly detected” and expects you to decode it like an ancient prophecy.
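When trialling a tool, one low-tech test is to export a handful of sample alerts and check whether each one answers those five questions. The sketch assumes alerts can be exported as simple key-value records. The field names are hypothetical and will not match any particular vendor's export format.

```python
# The five questions a useful alert should answer, taken from the list above.
REQUIRED_FIELDS = ("what_happened", "user_or_device", "why_it_matters",
                   "action_taken", "next_step")

def unanswered(alert: dict) -> list:
    """Return the questions this alert leaves blank."""
    return [field for field in REQUIRED_FIELDS if not str(alert.get(field, "")).strip()]

vague = {"what_happened": "Anomaly detected"}
clear = {
    "what_happened": "Sign-in from an unfamiliar country at 02:13",
    "user_or_device": "j.smith (LAPTOP-04)",
    "why_it_matters": "Followed by 900 file downloads from shared documents",
    "action_taken": "Session revoked and sign-in blocked",
    "next_step": "Confirm with the user, reset password, review inbox rules",
}
print(unanswered(vague))  # ['user_or_device', 'why_it_matters', 'action_taken', 'next_step']
print(unanswered(clear))  # []
```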

The Main Risks Of Trusting AI Security Tools Too Much

False Confidence

The biggest danger is thinking, “We have AI security, so we are safe.”

That is exactly how businesses end up with:

No tested backups

No MFA

Old admin accounts

Unpatched laptops

No incident response plan

Staff clicking phishing emails

A security tool nobody checks

Privacy Mistakes

If the AI tool scans staff emails, files or behaviour, the business must handle that responsibly. Staff monitoring needs transparency and proportionality.

Over-Automation

Automatic blocking can be useful, but it can also disrupt business.

Imagine an AI tool locking out your finance user during payroll week or blocking access to your booking system during peak trading. Security should reduce risk, not accidentally flatten operations.
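One way to reduce that risk is to make automated responses proportionate. The tool acts automatically on ordinary accounts. For accounts and dates the business has marked as critical, it challenges and escalates rather than locking anyone out. The sketch assumes the tool exposes a 0 to 1 confidence score and lets you script responses. Both are assumptions, and the accounts, dates and thresholds are hypothetical.

```python
from datetime import date

def automated_response(account, day, confidence, protected_accounts, protected_days):
    """Choose a proportionate response to a suspected account compromise.

    confidence: the tool's 0-1 estimate that the account is compromised (assumed).
    """
    if confidence < 0.5:
        return "log only"
    if account in protected_accounts or day in protected_days:
        # Avoid a hard lockout that could stop payroll or bookings:
        # challenge the user and get a person involved instead.
        return "require fresh MFA and alert a named person"
    return "block sign-in automatically"

PROTECTED_ACCOUNTS = {"finance@example.co.uk"}   # hypothetical
PAYROLL_DAYS = {date(2025, 3, 28)}               # hypothetical

print(automated_response("finance@example.co.uk", date(2025, 3, 10), 0.9, PROTECTED_ACCOUNTS, PAYROLL_DAYS))
print(automated_response("sales@example.co.uk", date(2025, 3, 10), 0.9, PROTECTED_ACCOUNTS, PAYROLL_DAYS))
print(automated_response("sales@example.co.uk", date(2025, 3, 28), 0.9, PROTECTED_ACCOUNTS, PAYROLL_DAYS))
```

Whether a critical account should ever be locked without a person deciding is a business choice. The point is to make that choice in advance, not to discover it during payroll week.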

What A Sensible UK Small Business Setup Looks Like

Basic Layer

For most UK SMEs, start with:

Microsoft 365 Business Premium or equivalent

MFA on every account

Endpoint protection on all devices

Automatic patching

Cloud backup

Password manager

Admin rights restricted

Cyber Essentials controls

Staff awareness training

AI-Assisted Layer

Then add AI-assisted security through:

Microsoft Defender features

Email threat protection

Managed detection and response

AI-based endpoint detection

Cloud login monitoring

Phishing simulation and training

Vulnerability prioritisation

Human Layer

Finally, have:

A named person responsible for cyber security

External IT or cyber support

Incident response checklist

Backup restore test

Annual cyber review

Supplier risk review

That combination is far more reliable than buying one shiny AI product and hoping it behaves like a digital superhero instead of confused software with a marketing department.

Final Verdict: Can AI Security Tools Be Trusted?

Yes, But Only With Controls

AI security tools can be trusted for:

Spotting suspicious behaviour

Reducing alert overload

Improving phishing detection

Helping prioritise threats

Speeding up investigation

Supporting small IT teams

They should not be trusted to:

Replace MFA

Replace backups

Replace Cyber Essentials

Replace staff training

Replace human judgement

Make serious decisions without review

Magically fix poor IT management

The Practical Rule For UK Businesses

Use AI security tools as a second brain, not as the only brain.

A good AI security tool can make a UK small business safer. But it must sit inside a proper security setup: MFA, patching, backups, access control, staff training, Cyber Essentials-style controls and someone responsible for responding to alerts.

AI cyber security is useful. Blind faith in it is not. That is less “modern defence strategy” and more “installing a smart lock while leaving the windows open”.

References

NCSC: AI and cyber security guidance (ncsc.gov.uk)

NCSC: Artificial intelligence cyber guidance hub (ncsc.gov.uk)

NCSC: Adversarial attacks against machine learning and AI (ncsc.gov.uk)

ICO: AI and data protection guidance (ico.org.uk)

ICO: Automated decision-making and profiling guidance (ico.org.uk)

Microsoft Digital Defense Report 2025 (microsoft.com)