Phishing — the practice of tricking individuals into revealing passwords, payment information or sensitive data — remains the single most common cybercrime in the UK.

According to the National Cyber Security Centre (NCSC), more than 80% of reported cyber incidents in 2025 began with a phishing email or text message.

Despite billions spent on security upgrades, phishing works for one key reason: it targets human psychology, not network weaknesses.

This makes it hard for either humans or Artificial Intelligence (AI) to stop phishing completely, though AI is trying.

How AI Is Being Used to Prevent Phishing

1. Email Filtering and Threat Detection

AI‑powered filters are now standard in major email clients and workplace systems.

They analyse vocabulary, tone, structure, and embedded links to detect scams.

Tools such as Microsoft Defender and Google’s AI‑driven “Safe Browsing” system use machine‑learning models trained on millions of phishing samples.

Key features:

- Contextual analysis: AI looks for suspicious patterns such as fake invoice terminology, spoofed domain names, or urgency‑based wording (“immediate action required”).

- Image and logo inspection: Algorithms can detect counterfeit logos or subtle design inconsistencies that humans might miss.

- Real‑time behaviour analysis: AI checks if URLs redirect unusually or harvest credentials mid‑session.
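As a rough illustration of the contextual checks listed above, the sketch below flags lookalike sender domains and urgency wording. It is a minimal rule-based stand-in, not how Microsoft Defender or Safe Browsing actually work: the trusted domains, phrase list, similarity threshold and function names are all assumptions made up for this example, and production filters learn such signals from large labelled datasets.

```python
from difflib import SequenceMatcher
from typing import List, Optional

# Hypothetical allow-list of domains an organisation genuinely deals with.
TRUSTED_DOMAINS = ["examplebank.co.uk", "payroll.example.com"]

# Illustrative urgency phrases; a real model would learn these from data.
URGENCY_PHRASES = ["immediate action required", "account suspended", "verify now"]


def lookalike_of(domain: str, threshold: float = 0.85) -> Optional[str]:
    """Return the trusted domain that `domain` appears to imitate, if any."""
    domain = domain.lower()
    if domain in TRUSTED_DOMAINS:
        return None  # an exact match is genuine, not a lookalike
    for trusted in TRUSTED_DOMAINS:
        # ratio() is 1.0 for identical strings and falls as they diverge.
        if SequenceMatcher(None, domain, trusted).ratio() >= threshold:
            return trusted
    return None


def phishing_signals(sender_domain: str, body: str) -> List[str]:
    """Collect human-readable reasons why a message looks suspicious."""
    signals = []
    imitated = lookalike_of(sender_domain)
    if imitated:
        signals.append(f"sender domain resembles {imitated}")
    text = body.lower()
    for phrase in URGENCY_PHRASES:
        if phrase in text:
            signals.append(f"urgency phrase: {phrase!r}")
    return signals


if __name__ == "__main__":
    for reason in phishing_signals("examp1ebank.co.uk",
                                   "Immediate action required: verify now"):
        print(reason)
```

String similarity catches simple character swaps such as "1" for "l", but it would miss many other spoofing tricks, which is one reason real systems combine many signals.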

Reports sent to the NCSC’s Suspicious Email Reporting Service are processed through AI clustering systems, which flag over 100,000 phishing domains per month. This shows how automation speeds up analysis that would otherwise bottleneck at manual inspection.

2. Natural Language Processing (NLP) for Social Engineering

Phishing scams are increasingly polished — some even generated by criminal AIs themselves.

To match this evolution, cybersecurity AI uses natural language processing to identify emotional manipulation, flattery, or scam urgency.

For example, UK fintech firms have deployed predictive models that can spot deceptive emotional tone — emails starting with “just a friendly reminder” or “polite follow‑up” — before they reach the user’s inbox.
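To give a feel for how a language model can learn manipulative wording rather than rely on fixed rules, here is a toy naive Bayes text classifier written with the standard library only. The six training sentences are invented for illustration, the labels "phish" and "ham" are my own, and nothing here reflects the models the fintech firms mentioned above actually use.

```python
import math
from collections import Counter

# Toy labelled examples, invented for illustration only.
TRAINING = [
    ("urgent verify your account now or it will be suspended", "phish"),
    ("just a friendly reminder to confirm your payment details today", "phish"),
    ("you have been specially selected claim your reward immediately", "phish"),
    ("minutes from the project meeting are attached", "ham"),
    ("lunch on thursday to discuss the quarterly report", "ham"),
    ("the updated schedule for next week is in the shared folder", "ham"),
]


def train(examples):
    """Count words per class and documents per class."""
    word_counts = {"phish": Counter(), "ham": Counter()}
    doc_counts = Counter()
    for text, label in examples:
        word_counts[label].update(text.split())
        doc_counts[label] += 1
    vocab = set(word_counts["phish"]) | set(word_counts["ham"])
    return word_counts, doc_counts, vocab


def phish_log_odds(text, model):
    """Positive means the wording looks more like the phishing examples."""
    word_counts, doc_counts, vocab = model
    scores = {}
    for label in ("phish", "ham"):
        total = sum(word_counts[label].values())
        # Start from the class prior, then add smoothed word likelihoods.
        score = math.log(doc_counts[label] / sum(doc_counts.values()))
        for word in text.lower().split():
            if word in vocab:  # words never seen in training are ignored
                # Add-one (Laplace) smoothing avoids log(0).
                score += math.log(
                    (word_counts[label][word] + 1) / (total + len(vocab))
                )
        scores[label] = score
    return scores["phish"] - scores["ham"]


MODEL = train(TRAINING)

if __name__ == "__main__":
    print(phish_log_odds("friendly reminder verify your payment now", MODEL))
    print(phish_log_odds("meeting schedule for the report", MODEL))
```

With so little data the scores mean very little in absolute terms. The point is only that the classifier picks up tone from examples, so it can be retrained as scam wording changes.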

3. Adaptive Authentication

When AI detects strange login attempts or behaviour (for example, signing in from another country or at an unusual time), it can trigger multi‑factor authentication or stricter access checks.

These systems don’t stop phishing attempts outright but limit the damage once a password is stolen by flagging that the behaviour doesn’t fit the user’s normal pattern.
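The idea can be sketched as a simple risk score that decides when to step up authentication. This is an assumption-heavy illustration: the `LoginAttempt` and `UserProfile` types, the weights, the thresholds and the action names are all hypothetical, and real adaptive authentication products use far richer behavioural models.

```python
from dataclasses import dataclass


@dataclass
class LoginAttempt:
    country: str
    hour: int           # 0-23, local hour of the attempt
    known_device: bool


@dataclass
class UserProfile:
    usual_countries: set
    usual_hours: range  # e.g. range(7, 20) covers 07:00 to 19:59


def risk_score(attempt: LoginAttempt, profile: UserProfile) -> int:
    """Add up illustrative penalties for behaviour outside the user's norm."""
    score = 0
    if attempt.country not in profile.usual_countries:
        score += 2  # unfamiliar country weighs most in this sketch
    if attempt.hour not in profile.usual_hours:
        score += 1
    if not attempt.known_device:
        score += 1
    return score


def required_action(attempt: LoginAttempt, profile: UserProfile) -> str:
    """Map the score to a hypothetical access decision."""
    score = risk_score(attempt, profile)
    if score >= 3:
        return "deny_pending_review"
    if score >= 1:
        return "require_mfa"
    return "allow"


if __name__ == "__main__":
    profile = UserProfile(usual_countries={"GB"}, usual_hours=range(7, 20))
    print(required_action(LoginAttempt("GB", 10, True), profile))
    print(required_action(LoginAttempt("FR", 10, True), profile))
    print(required_action(LoginAttempt("FR", 3, False), profile))
```

A stolen password alone passes the first check only when everything else about the login also looks normal, which is what limits the damage.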

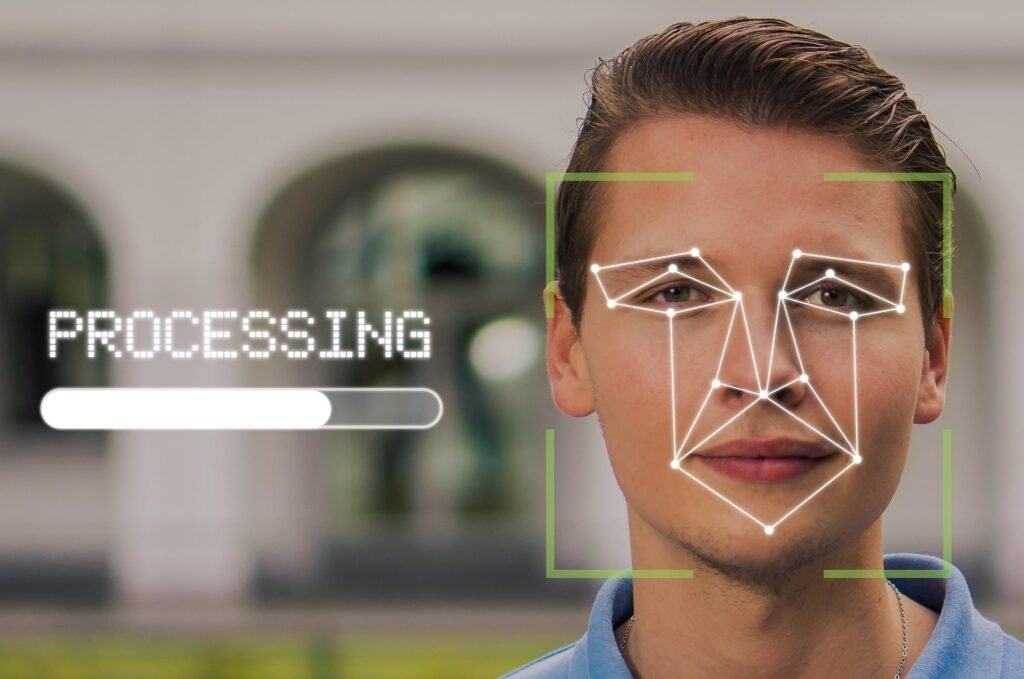

4. Image Recognition and Deepfake Detection

Visual‑AI tools are now used to counter fraudulent attachments and images in phishing emails — for instance, scanning QR codes, invoices or ID documents for digital manipulation.

Financial institutions, including Barclays and Lloyds Banking Group, deploy AI visual verification to distinguish genuine login screens from forgeries, a growing issue as scammers use deepfake branding.

Why Phishing Still Works (and AI Still Struggles)

Humans Remain the Weak Link

AI can filter and warn, but phishing nearly always ends with a human choice — clicking a link, opening a file, sending credentials.

Even if 99% of phishing attempts are stopped, the remaining 1% targeting millions of users will still succeed occasionally.

A 2024 UK Finance survey found that half of UK employees admitted they hadn’t checked sender details in a recent suspicious email — showing that training and human awareness lag behind technology.
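The 99% point above is easier to see with numbers. The calculation below uses the 99% block rate from the text; the campaign size of five million messages and the 3% click rate are hypothetical figures chosen purely for illustration, not statistics from any of the cited reports.

```python
def expected_compromises(messages_sent: int,
                         filter_block_rate: float,
                         click_rate: float):
    """Return (messages delivered, expected victims) for a campaign."""
    delivered = messages_sent * (1 - filter_block_rate)
    victims = delivered * click_rate
    return delivered, victims


if __name__ == "__main__":
    # Hypothetical campaign: 5 million messages, 99% blocked, 3% of
    # recipients who see the message go on to click.
    delivered, victims = expected_compromises(5_000_000, 0.99, 0.03)
    print(f"{delivered:,.0f} messages delivered")   # 50,000 messages delivered
    print(f"{victims:,.0f} expected victims")       # 1,500 expected victims
```

Under these assumptions a filter that is 99% effective still leaves around 1,500 compromised users, which is why volume matters as much as accuracy.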

AI vs AI: The Arms Race

Cybercriminals now use the same AI tools to generate more convincing messages that mimic legitimate writing styles.

Large‑language‑model‑based phishing kits can analyse a company’s website or social media presence and create personalised, error‑free messages.

This eliminates many of the red flags (misspellings or poor grammar) once used by both humans and AI to identify scams.

Effectively, detection AI is fighting generative AI in a digital arms race where each side learns from the other.

Privacy Laws Limit Data Sharing

UK data‑protection laws (the UK GDPR and the Data Protection Act 2018) limit how much information companies can share about phishing patterns, even for training AI.

Without pooled, large‑scale data from every sector, detection algorithms can’t learn fast enough to cover all attack types, giving criminals an advantage.

Fragmented Defences

AI systems often operate in silos — each organisation runs its own detection algorithm, meaning insights are not shared between industries.

In the criminal world, by contrast, phishing toolkits and data‑breach databases circulate freely on dark‑web markets, keeping attackers better informed than some defenders.

What AI Could Do Better (But Often Can’t Yet)

1. Real‑Time Decision Support

Future AI systems could analyse communication context in real time — comparing a suspect email with a user’s historical exchanges to check whether the voice and intent align.

However, privacy restrictions and computing requirements currently make that level of personalisation difficult to scale securely.
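A very simplified version of comparing a suspect email with a sender's past messages is a bag-of-words cosine similarity, sketched below. The function names, the 0.3 threshold and the sample messages are assumptions for illustration. A real system would need much stronger stylistic features, and storing message history in this way raises exactly the privacy questions mentioned above.

```python
import math
import re
from collections import Counter


def word_vector(text: str) -> Counter:
    """Lower-case the text and count its words."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two word-count vectors (0.0 to 1.0)."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def matches_sender_history(suspect: str, history: list,
                           threshold: float = 0.3) -> bool:
    """True if the suspect message uses wording similar to past messages."""
    profile = Counter()
    for past in history:
        profile.update(word_vector(past))
    return cosine_similarity(word_vector(suspect), profile) >= threshold


if __name__ == "__main__":
    history = [
        "hi sam, the draft report is attached, let me know what you think",
        "thanks sam, see you at the meeting on tuesday",
    ]
    print(matches_sender_history(
        "hi sam, the report draft is attached, see you tuesday", history))
    print(matches_sender_history(
        "dear customer, transfer funds urgently to the account below", history))
```

Word overlap is a crude proxy for "voice", and an attacker who has read the victim's mailbox could imitate it, so a check like this could only ever be one signal among several.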

2. Cross‑Sector Threat Sharing

AI collaborations between internet providers, banks, and government agencies are being discussed, but bureaucratic and privacy hurdles persist.

An NCSC pilot project with major UK banks aims to create a unified AI warning system that blocks phishing domains within minutes of first detection — potentially a major leap forward if data protection frameworks can adapt.

3. End‑User Assistance

Generative AI tutors could serve as interactive digital cybersecurity coaches inside workplace emails, explaining warnings in plain English instead of technical jargon.

For example, when a suspicious message is received, the AI might say:

“This message looks similar to 143 suspicious emails sent this week using spoofed bank addresses.”

This educates users passively while defending them, turning awareness into a continuous habit rather than an annual checkbox training session.
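Turning detection data into a plain-English explanation could be as simple as the template below. This is only a sketch: the function name and parameters are hypothetical, and the count of similar emails is assumed to come from whatever detection system sits behind the inbox.

```python
def explain_warning(similar_reports: int, window_days: int, technique: str) -> str:
    """Build a short, jargon-free explanation of why a message was flagged."""
    period = "today" if window_days == 1 else f"in the last {window_days} days"
    noun = "email" if similar_reports == 1 else "emails"
    return (
        f"This message looks similar to {similar_reports} suspicious {noun} "
        f"reported {period} that used {technique}. "
        "Check the sender's address before you click or reply."
    )


if __name__ == "__main__":
    print(explain_warning(143, 7, "spoofed bank addresses"))
```

A generative model could vary the wording, but keeping the facts (how many, how recently, what trick) in structured fields makes the explanation easy to verify.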

How Much Has AI Already Helped?

- Since launching its AI‑supported inbox filtering in 2022, Microsoft 365 claims to have reduced phishing mail reaching users by over 90%.

- UK businesses using machine‑learning email security tools report a 27% drop in successful phishing incidents (NCSC/IBM Cyber Resilience Survey, 2025).

- The NCSC’s takedown service now removes 1.4 million malicious domains each year, almost entirely powered by automated AI detection.

These are genuine improvements, but the number of attacks that succeed hasn’t fallen by an equivalent amount, because phishing volumes keep rising faster than defences improve.

A Real‑World and Cynical View

AI is doing impressive work behind the scenes, but it’s a quiet success constantly overshadowed by human fallibility.

The technology keeps getting better; people, predictably, do not.

Most phishing protection systems are invisible — users rarely notice the thousands of emails stopped daily, only the one that slips through.

That last one, however, is all it takes to cause a ransomware breach, financial loss or reputational damage.

AI cannot change the psychology of curiosity, fear or trust, which are the emotional levers phishing attacks pull most effectively.

Until human education and AI design align — putting user behaviour at the centre, not just network defence — phishing will continue as Britain’s cheapest, simplest, and most reliable cybercrime.

References (UK‑Focused)

- National Cyber Security Centre – Phishing Threat Landscape Update, 2025

- UK Finance – Cybercrime and Consumer Awareness Survey, 2024

- Ofcom – Online Safety and Fraud Prevention Report, 2025

- Energy & Security Catapult – AI in National Cyber Resilience, 2025

- IBM / NCSC – UK Business Cyber Resilience Study, 2025

Summary

| Area | What AI Does | Limitations |
|---|---|---|
| Email & Message Filtering | Detects scams via pattern recognition | Criminals use AI to create more convincing emails |
| Behaviour Monitoring | Identifies unusual logins & activity | Limited by privacy laws & data variety |
| Real‑Time Analysis | Uses NLP to flag manipulative language | Can’t yet interpret every social cue |
| Cross‑Sector Sharing | Rapidly updates threat lists | Blocked by regulation & corporate silos |
| End‑User Education | Embeds alerts & explainers in emails | Behavioural change still slow |

In conclusion:

AI has become an essential line of defence against phishing in the UK, quietly blocking millions of attacks.

But it’s caught in a technological arms race with criminals deploying equally smart tools and exploiting human trust.

Until wider collaboration, data sharing and genuine user‑centred education catch up, AI will keep doing the hard work — effective, invisible, and never quite winning.
