The short (annoying) truth

For a big chunk of cybercrime: yes, AI is lowering the “skill floor”. It makes scams faster, more convincing, more scalable, and easier to run, even for people who would previously have failed basic literacy tests. But AI doesn’t magically remove the need for real capability when criminals want reliable access to well-defended organisations, persistence, privilege escalation, and monetisation at scale.

The UK’s own security community is pretty blunt about the direction of travel: the NCSC assesses AI will increase the speed, scale and sophistication of cyber operations between now and 2027.

Where AI genuinely reduces the skills criminals need

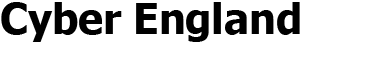

1) Phishing and social engineering: “good enough” is now easy

AI tools are excellent at writing natural-sounding messages, tailoring tone, and translating into fluent British English, which matters when you’re trying to trick staff in English organisations.

- Higher-quality scam copy at scale: criminals can generate hundreds of variants, avoid obvious spelling tells, and tailor to a target’s role (payroll, procurement, HR).

- Better pretexting: AI helps build believable stories, excuses, and “thread replies” to keep victims engaged.

- Faster “OSINT-to-message”: paste a LinkedIn profile or company website text, get a tailored lure in seconds.

The UK Government’s own frontier AI risk material states generative AI will “almost certainly… lower the barriers to entry” for less sophisticated threat actors.

2) Fraud, impersonation and “deepfake adjacent” deception

Not every scam needs Hollywood-grade deepfakes. Even basic voice cloning, cheap face-swap video, or AI-edited imagery can add just enough believability to push a victim over the edge. Europol has warned that organised crime is using AI for impersonation and to scale scams more cheaply and efficiently.

3) Malware and tooling: less coding, more “prompting”

AI can:

- explain error messages,

- suggest code snippets,

- help glue together scripts,

- rewrite open-source proof-of-concepts into slightly different forms.

That reduces the need for some technical depth at the low end. The NCSC Annual Review notes that cyber criminals are adapting their business models to embrace AI, increasing the volume and impact of attacks.

4) Reconnaissance and target research

AI makes it easier to summarise leaked data, scrape public documents, and build target dossiers, which feeds into:

- business email compromise (BEC),

- supplier invoice fraud,

- well-timed password spraying,

- helpdesk social engineering.

This is especially relevant to English workplaces, where attackers rely on convincing process-language (finance approvals, supplier changes, “urgent” CEO requests).

Where AI doesn’t remove the need for serious criminal skill

1) Breaking into well-defended organisations still requires capability

Getting initial access reliably, moving laterally, escalating privileges, staying hidden, and exfiltrating data without triggering alarms still takes:

- experience with enterprise environments,

- operational security discipline,

- knowledge of defensive tooling,

- patience (a rare criminal virtue).

AI can assist, but it can’t replace the attacker’s need to understand what they’re doing when things go wrong (and they always do).

2) The “cybercrime economy” matters more than individual genius

A huge amount of modern cybercrime is modular:

- initial access brokers sell logins,

- ransomware affiliates deploy payloads,

- money launderers cash out,

- support roles handle negotiation and pressure tactics.

Europol’s IOCTA work emphasises data and access as central commodities in the cybercrime economy.

So yes, the average criminal can be less skilled, because they can buy capability.

3) AI introduces new failure modes for criminals, too

AI outputs can be:

- confidently wrong,

- inconsistent,

- traceable (reused phrasing, model fingerprints, patterns),

- risky to operational security if attackers paste sensitive details into cloud tools.

Skilled crews manage these risks. Less-skilled ones get caught, scammed by other criminals, or simply waste time.

What this means for English targets at home

Households: the biggest “AI uplift” is psychological, not technical

Expect more:

- highly convincing “HMRC”, “Royal Mail”, “bank fraud team” messaging styles,

- emotionally manipulative scripts,

- personalised lures using scraps of data from breaches.

This is classic crime with better writing, better timing, and more volume. The NCSC assessment focuses on AI increasing the effectiveness of cyber operations in the near term.

What this means for English targets at work

Workplaces: AI boosts the scams that exploit process and trust

The sweet spot for criminals is still human workflow:

- invoice changes,

- payroll diversion,

- supplier “new bank details” requests,

- IT helpdesk resets,

- exec impersonation.

An attacker doesn’t need to hack anything if an AI-written message can talk someone into doing the work for them.

Bottom line for 2026

So, do cyber criminals need less skill?

Yes, for entry-level cybercrime and fraud: AI lowers the barrier, improves the “professionalism” of scams, and increases volume.

No, for high-impact intrusions: serious attacks still need experience, infrastructure, and criminal supply chains.

In other words: AI is making cybercrime more accessible, like giving everyone a megaphone. It doesn’t make everyone a genius. It just makes the noise louder.
